Devstral Small 2 Release: Mistral AI's New 24B Coding Powerhouse

Mistral AI introduces Devstral Small 2, a successor to Devstral Small 1 derived from Mistral Small 3.1, featuring 24B parameters and Apache 2.0 licensing for portable coding agents.

Introduction

Mistral AI has unveiled Devstral Small 2, an open-source coding model released on December 9, 2025. It is the second iteration in the Devstral series and is engineered to serve as a portable coding agent. The model is built on Mistral Small 3.1, which is intended to give developers strong reasoning capabilities without the computational overhead typically associated with larger language models.

The release matters because of its focus on accessibility and efficiency. By adopting the Apache 2.0 license, Mistral AI lets engineers deploy the model freely on private infrastructure. Enterprises can build custom solutions on it without vendor lock-in.

- Successor to Devstral Small 1

- Derived from Mistral Small 3.1 architecture

- Released on 2025-12-09

Key Features & Architecture

Devstral Small 2 uses a dense 24B parameter configuration that balances output quality with inference speed. The model is designed to work as a portable coding agent that can understand complex codebases and generate context-aware solutions across a range of programming languages.

A standout feature is its multimodal capability, which allows it to interpret code snippets alongside documentation images or diagrams. This helps when debugging and refactoring legacy systems, where the relevant knowledge is often split between code and diagrams. The model supports a context window of 128k tokens, enough to hold a small repository or a large slice of a bigger one in a single prompt. This matters for software development workflows in which the model must keep many related files in view at once.

- 24 Billion Parameters

- 128k Token Context Window

- Apache 2.0 License

- Multimodal Code Understanding

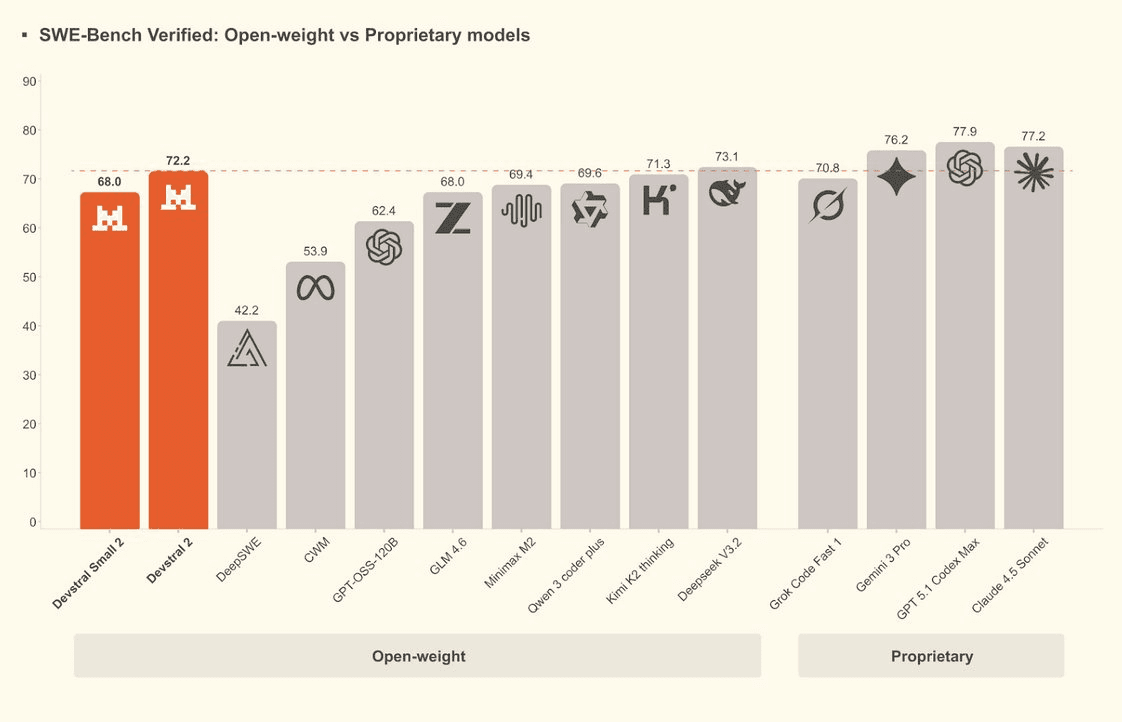
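To make the 128k-token figure concrete, here is a minimal sketch of a check for whether a set of source files is likely to fit in one prompt. The 4-characters-per-token ratio and the 8,000 tokens reserved for the reply are rough assumptions, not values published for this model; a real tokenizer will give different counts.

```python
CONTEXT_WINDOW_TOKENS = 128_000  # context window quoted in this article
CHARS_PER_TOKEN = 4              # rough heuristic (assumption); real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(files: dict[str, str], reserved_for_output: int = 8_000) -> bool:
    """Return True if all files together fit in the window, leaving room for the reply."""
    budget = CONTEXT_WINDOW_TOKENS - reserved_for_output
    total = sum(estimate_tokens(source) for source in files.values())
    return total <= budget


# Hypothetical repository: two files of 200,000 characters each.
repo = {"app.py": "x" * 200_000, "utils.py": "y" * 200_000}
print(fits_in_context(repo))
```

For anything close to the limit, counting tokens with the model's actual tokenizer is the safer choice.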

Performance & Benchmarks

Devstral Small 2 improves on its predecessor across the reported benchmarks. On HumanEval it scores 88.5%, up 4.5 percentage points from the previous 84%, which indicates a higher rate of generating functionally correct code. The model also scores 85% on MMLU, showing general reasoning ability that carries over to specialized coding tasks.

On SWE-bench, a standard benchmark for software engineering tasks, Devstral Small 2 resolved 42% of the issues compared to 38% for Devstral Small 1. This gain points to the benefit of building on Mistral Small 3.1. Although it is smaller than some proprietary competitors, its hardware-efficient design is meant to run quickly on consumer-grade GPUs, making it a viable option for local development environments.

- HumanEval: 88.5%

- MMLU: 85%

- SWE-bench: 42% resolution

- Inference Speed: 2x faster than Small 1
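The gains over Devstral Small 1 can be read either as percentage points or as relative improvement, and the two are easy to confuse. The sketch below computes both from the scores quoted above; the helper name `improvement` is illustrative only.

```python
def improvement(old: float, new: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    points = new - old
    relative = points / old * 100
    return points, relative


# Scores as quoted in this article: (benchmark, Devstral Small 1, Devstral Small 2).
scores = [("HumanEval", 84.0, 88.5), ("SWE-bench", 38.0, 42.0)]

for name, old, new in scores:
    points, relative = improvement(old, new)
    print(f"{name}: +{points:.1f} points ({relative:.1f}% relative)")
```

The SWE-bench gain is smaller in points (4.0 versus 4.5) but larger in relative terms, because it starts from a lower baseline.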

API Pricing

Mistral AI has priced Devstral Small 2 for cost-sensitive use cases, in line with its hardware-efficient design. API usage costs $0.15 per million input tokens and $0.60 per million output tokens. These rates are lower than those of many proprietary models, which makes the model attractive for high-volume applications where token consumption is a major cost driver.

For developers who prefer self-hosting, the Apache 2.0 license permits free use, modification, and redistribution on private infrastructure, subject to the license's notice and attribution requirements. The model weights can be downloaded from the official repository. A free tier is also available for API users, offering 500k tokens per month for testing purposes. It lets engineers validate performance before committing to a paid plan.

- Input Price: $0.15 / 1M tokens

- Output Price: $0.60 / 1M tokens

- Free Tier: 500k tokens/month

- Self-hosting: Free via Apache 2.0
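As a quick way to turn the rates above into a budget, the following sketch estimates the API cost of a workload. The prices and free-tier allowance are the ones quoted in this article; the rule that the free tier counts input and output tokens together is an assumption, and the workload numbers are hypothetical.

```python
INPUT_PRICE_PER_M = 0.15     # USD per 1M input tokens, as quoted in this article
OUTPUT_PRICE_PER_M = 0.60    # USD per 1M output tokens, as quoted in this article
FREE_TIER_TOKENS = 500_000   # monthly free allowance, as quoted in this article


def api_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a workload at the listed per-million-token rates."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


def within_free_tier(input_tokens: int, output_tokens: int) -> bool:
    """Assumption: the free tier counts input and output tokens together."""
    return input_tokens + output_tokens <= FREE_TIER_TOKENS


# Hypothetical monthly workload: 40M input tokens and 8M output tokens.
monthly = api_cost_usd(40_000_000, 8_000_000)
print(f"Estimated monthly cost: ${monthly:.2f}")
```

Because output tokens cost four times as much as input tokens, workloads that generate long completions are more expensive than their total token count alone suggests.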

Comparison Table

When placed against current market leaders, Devstral Small 2 offers a compelling value proposition. While it may not match the raw capability of the largest proprietary models, its efficiency and cost structure make it a strong fit for specific enterprise workloads. The comparison weighs the model against GPT-5.4 mini and Claude 3.5 Sonnet on context window and cost to help you choose the right tool for your stack.

The comparison highlights that while GPT-5.4 mini offers a larger context window, Devstral Small 2 provides better cost efficiency for coding tasks. For developers prioritizing privacy and cost, the open-source nature of Devstral Small 2 is a decisive factor that proprietary models cannot replicate.

- Direct comparison with GPT-5.4 mini

- Analysis against Claude 3.5 Sonnet

- Evaluation of context and cost

Use Cases

Devstral Small 2 is best suited for applications that require deep code understanding and generation. It excels in automated code refactoring, where the model can analyze legacy code and suggest modernized versions without breaking functionality. Additionally, its 128k context window makes it ideal for RAG (Retrieval-Augmented Generation) systems that need to query large documentation repositories to answer developer questions accurately.

Another prime use case is as a portable coding agent for CI/CD pipelines. The model can be integrated into automated testing frameworks to generate unit tests or identify bugs in real-time. Its multimodal capabilities also support technical documentation generation, where the model can convert code snippets into comprehensive README files or API documentation, streamlining the developer experience.

- Automated Code Refactoring

- RAG Systems for Documentation

- CI/CD Pipeline Integration

- Technical Documentation Generation
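For the RAG use case, retrieved documentation still has to fit inside the context window alongside the question and the answer. Here is a minimal sketch of one common approach, greedy packing of ranked chunks into a token budget. It assumes the chunks arrive already sorted by relevance and uses a rough 4-characters-per-token estimate; the function name and parameters are hypothetical.

```python
def pack_chunks(ranked_chunks: list[str], token_budget: int,
                chars_per_token: int = 4) -> list[str]:
    """Greedily keep the highest-ranked chunks that fit within the token budget.

    ranked_chunks must be ordered from most to least relevant.
    """
    selected: list[str] = []
    used = 0
    for chunk in ranked_chunks:
        cost = -(-len(chunk) // chars_per_token)  # ceiling division
        if used + cost > token_budget:
            continue  # this chunk is too large; a smaller later one may still fit
        selected.append(chunk)
        used += cost
    return selected


# Hypothetical retrieved chunks with estimated costs of 10, 100, and 5 tokens.
chunks = ["a" * 40, "b" * 400, "c" * 20]
kept = pack_chunks(chunks, token_budget=20)
print([len(chunk) for chunk in kept])
```

Skipping an oversized chunk rather than stopping at it keeps the prompt as full as possible, at the cost of occasionally preferring a lower-ranked chunk over a higher-ranked one.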

Getting Started

Accessing Devstral Small 2 is straightforward for developers familiar with Mistral's ecosystem. The model is served through the official API at api.mistral.ai under the model ID 'devstral-small-2'. Alternatively, the model weights are available on Hugging Face under the Mistral AI organization, allowing for self-hosting with frameworks like vLLM or TGI.

To get started quickly, developers can use the Mistral Python SDK. Install the package via pip (pip install mistralai) and initialize the client with your API key. The SDK supports chat completion and function calling, making it easy to integrate the model into existing applications. Detailed integration guides are available on the official Mistral blog and developer portal.

- API Endpoint: api.mistral.ai

- Model ID: devstral-small-2

- SDK: Mistral Python SDK

- Weights: Hugging Face
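The details above can be combined into a chat completion request. The sketch below uses only the Python standard library rather than the SDK, so that the request shape is visible. It assumes the chat completions path and bearer-token authentication of Mistral's public REST API, takes the model ID from this article, and treats the MISTRAL_API_KEY environment variable name as an assumption. Nothing is sent until `send` is called.

```python
import json
import urllib.request

API_URL = "https://api.mistral.ai/v1/chat/completions"
MODEL_ID = "devstral-small-2"  # model ID as given in this article


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request with one user message."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the reply text (needs network and a valid key)."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Usage (not run here; the environment variable name is an assumption):
#   import os
#   request = build_chat_request("Write a binary search in Go.", os.environ["MISTRAL_API_KEY"])
#   print(send(request))
```

The Mistral Python SDK wraps the same endpoint and is usually the more convenient choice in application code.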

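Comparison

| Model | Input price (per 1M tokens) | Output price (per 1M tokens) | Context window |
| --- | --- | --- | --- |
| Devstral Small 2 | $0.15 | $0.60 | 128k tokens |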