Blog

Latest news, tutorials, and insights about AI

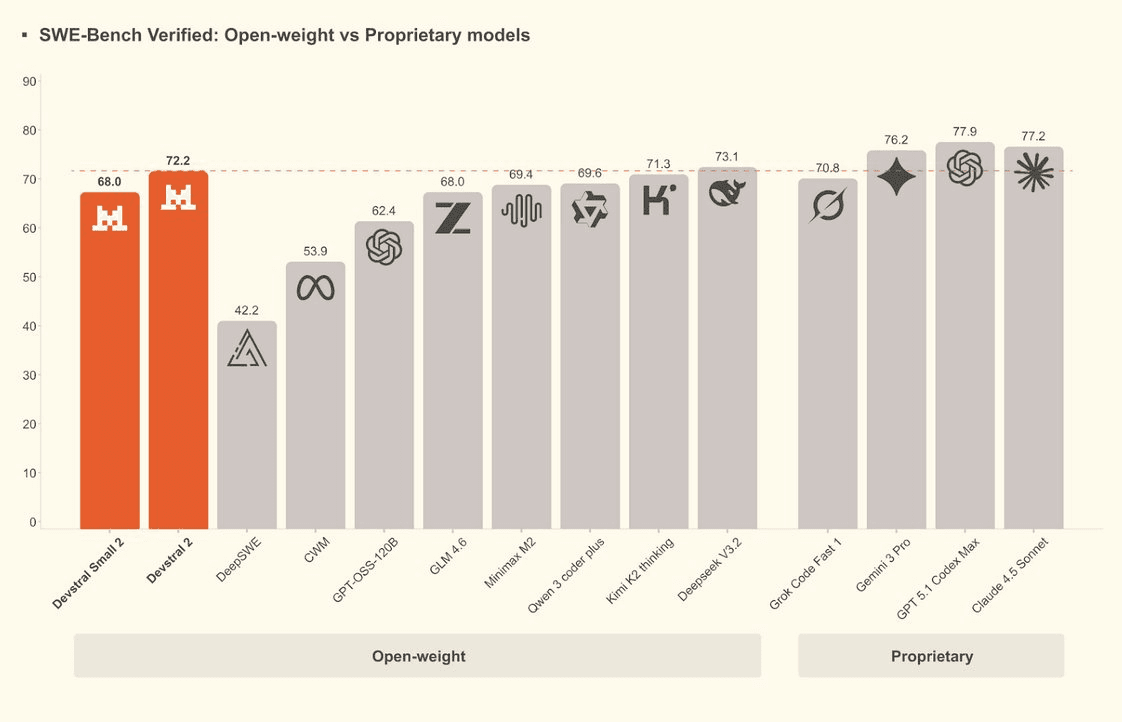

Mistral AI introduces Devstral Small 2, a successor to Devstral Small 1 derived from Mistral Small 3.1, featuring 24B parameters and Apache 2.0 licensing for portable coding agents.

Mistral AI releases Devstral 2, a 123B parameter open-source coding model with top-tier SWE-Bench performance and a revenue-based license.

Mistral AI releases Ministral 3 14B, an open-source multimodal model combining best-in-class vision and text capabilities under the Apache 2.0 license.

Mistral AI releases Ministral 3 8B, a powerful multimodal model with Apache 2.0 licensing that rivals frontier architectures.

Mistral AI's newest 3B parameter model brings vision capabilities to local edge devices such as phones and drones, under the Apache 2.0 license.

Amazon unveils Nova 2 at re:Invent 2025, bringing MoE architecture and multimodal capabilities to enterprise via Bedrock.

Mistral AI unveils Large 3, a 41B active parameter MoE model with open weights, challenging global leaders in reasoning and efficiency.

Zhipu AI releases GLM-4.7, an open-weights coding model topping leaderboards with a cost-effective Flash variant.

MiniMax releases M2.1, a 230B MoE coding model offering SWE-bench scores of 74% at 92% lower cost than Western competitors.